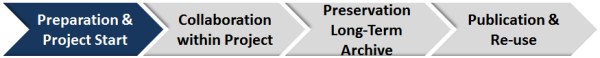

Data Management Wizard

Goal: Data Management = an obvious and integral part of research

You need a data management plan!

What is the name of your project?

Who is the funder (funding source)?

Who controls and leads the project (core research - you, your group, your PI)?

- Who is responsible for which part of project data management?

What is the start and end date of your project?

Are you considering involving a professional data center?

Are there any existing data relevant to your project?

The project might be enhanced by existing data

- Where were they obtained from, and who owns them?

- How are they available, in what state, and how can they be shared within the project?

- Which types of input data will be used in your project?

- How much storage space do you need for input data?

- Resources needed? (if not sure, get professional help)

- Who is responsible for managing the input data?

Which types of data will be used in your project?

sensor - instrument and source - platform description - structure - variable names - units - aggregation in time and space - experiment - coverage
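As a rough illustration, the descriptive items listed above could be captured in one structured record per data type. The sketch below is only one possible layout: the class name `DatasetDescription`, its field names, and all example values are hypothetical placeholders, not a prescribed metadata standard.

```python
from dataclasses import dataclass, asdict
import json


@dataclass
class DatasetDescription:
    """Hypothetical record describing one type of project data."""
    instrument: str            # sensor or instrument that produced the data
    platform: str              # source or platform description
    structure: str             # e.g. time series, gridded field, table
    variables: dict[str, str]  # variable name -> unit
    temporal_aggregation: str  # aggregation in time
    spatial_aggregation: str   # aggregation in space
    experiment: str
    coverage: str


def missing_fields(desc: DatasetDescription) -> list[str]:
    """Return the names of fields that were left empty."""
    return [name for name, value in asdict(desc).items() if not value]


# All values below are placeholders for illustration only.
example = DatasetDescription(
    instrument="thermometer (placeholder)",
    platform="weather station (placeholder)",
    structure="time series",
    variables={"air_temperature": "K"},
    temporal_aggregation="hourly mean",
    spatial_aggregation="",
    experiment="field campaign (placeholder)",
    coverage="one calendar year (placeholder)",
)

print(json.dumps(asdict(example), indent=2))
print("Still to be filled in:", missing_fields(example))
```

A record like this makes it easy to see which descriptive items are still undecided when the plan is drafted.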

Are there resources needed (conceptual development)?

- Do you need tools for production & processing of data and metadata?

For example:

Pre- and post-processing

Quality control and assurance: flagging of missing values, and documentation of procedures, sources of error and deviation, and gross errors

- Do you need tools for data and metadata management?

For example:

archiving of data and metadata

standardization of data and metadata + checker

identification: usable to find the data and metadata, with a description of the data directory, IDs, and checksums or Handles

access: authentication for data retrieval (who has access to which data during research?)

version assignment

backup and recovery

task monitoring with result list of data with pointers to location

- Do you need tools for data visualization?
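If no dedicated tool is available, the identification and monitoring items above (checksums, a result list of data with pointers to location) can be approximated with a simple checksum manifest. The following is a minimal sketch using only the Python standard library; the function names `sha256_of`, `build_manifest`, and `changed_files` are hypothetical, and SHA-256 is an assumed choice of checksum.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-256 checksum of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's path (relative to data_dir) to its checksum."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def changed_files(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """List files added, removed, or modified between two manifests."""
    return sorted(k for k in old.keys() | new.keys() if old.get(k) != new.get(k))
```

Storing the manifest next to the data and rebuilding it after each transfer or backup gives a basic check that nothing was altered or lost. A persistent identifier service such as Handle would go beyond this sketch.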

Do you have a data policy?

- Who owns the data?

- Covering project internal handling and exchange of data

- Covering long-term archiving and publication etc.

What are the costs?

- General

- Input Data

- Project Data

- Resources

- Long-term archiving

- Data publication

The project management should monitor production, exchange, and usage of data within the project

Usage of standards (content), controlled vocabularies

- How are your controlled vocabularies documented?

- Are your data self-explanatory in terms of structure and abbreviations used?

- How will you label and organize data, records and files?

- Are you using format standards and software that enable long-term validity and archiving of data?

Data documentation

- Does a project page exist?

- Which description and documentation can explain the data and how is it shared?

- what your data mean

- how they were collected

- result list of data with pointers to location – description of data directory

- Data identifier with versions

- Infrastructure and tools for data held in various places, version management and replication?

- Methods used to create them

- Use of controlled vocabulary

- Quality assessment - do you have quality levels?

- Do you have a project news, discussion, and annotation area?

Production, processing, and flow of data (deadlines) – Quality Control (QC) / Quality Assessment (QA)?

- What time schedules (data production/processing, ingest of data and metadata, replication, quality control) do you have?

- In-depth planning of the amount of storage space (make clear how each part of your scientific problem translates into specific requirements for storage space):

- How much of the data and at what growth rate?

- Will it change frequently?

- How long should it be retained?

- Do you need a backup?

- If your data are held in various places, how will you keep track of version and replication?
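The storage questions above can be turned into a first rough estimate. The sketch below assumes compound annual growth and treats each backup or replica as a full extra copy. The function name `projected_storage_tb` and the example figures are hypothetical, and real projects may grow in steps rather than smoothly.

```python
def projected_storage_tb(
    initial_tb: float,
    annual_growth_rate: float,
    years: int,
    copies: int = 1,
) -> float:
    """Estimate storage (in TB) after a number of years.

    Assumes the data volume grows by a fixed fraction each year
    (0.5 means +50% per year) and that every backup or replica is a
    full copy of the data.
    """
    if years < 0 or copies < 1:
        raise ValueError("years must be >= 0 and copies must be >= 1")
    return initial_tb * (1.0 + annual_growth_rate) ** years * copies


# Illustrative figures only: 10 TB at the start, +50% per year,
# one primary copy plus one backup.
for year in range(4):
    estimate = projected_storage_tb(10.0, 0.5, year, copies=2)
    print(f"year {year}: about {estimate:.1f} TB")
```

The retention period decides how many years to project, and the answer to the backup question sets `copies`.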

- Do you know what the master version of your data files is (provenance)?

- Do you need to anonymise data?

- Do you control the following?

- Production and processing status

- Data versioning

- Usage of controlled vocabulary

- Data availability with checksum + identifier, as simple as a file system or something more sophisticated (Handle)

- Consistency, accuracy and completeness of the data

- Sources of errors and deviation

- How do you document your checks and annotations of users?

- When converting data across formats for long-term archiving, do you check that no data or internal metadata have been lost or changed?
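One way to approach the format conversion question above is a round-trip comparison: read the original, convert it, read the converted file back, and compare the records. The sketch below uses CSV and JSON from the Python standard library purely as stand-in formats. The function names are hypothetical, and real archive formats will need format-specific readers and a comparison of their internal metadata as well.

```python
import csv
import io
import json


def read_csv_records(text: str) -> list[dict[str, str]]:
    """Parse CSV text into a list of row dictionaries (all values as strings)."""
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


def convert_to_json(records: list[dict[str, str]]) -> str:
    """Convert the records to a JSON document (the 'archive' format here)."""
    return json.dumps(records, ensure_ascii=False)


def conversion_is_lossless(original: list[dict[str, str]], converted_json: str) -> bool:
    """Check that reading the converted data back yields the original records."""
    return json.loads(converted_json) == original


# Illustrative data only; the empty field stands for a missing value.
source = "station,temp_k\nA,280.5\nB,\n"
records = read_csv_records(source)
archived = convert_to_json(records)
print("lossless:", conversion_is_lossless(records, archived))
```

Documenting the result of such a check alongside the archived files also helps answer how checks are recorded.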

Meetings including data management topics?

Data can be long-term archived at any stage of your project

QA (role of project, role of data center), annotation, granularity (ingest units), formats, volumes etc.

Data submission:

- Which data and metadata?

- Which data center?

- What is the data and metadata structure requested by the archive?

- What is the data format requested by the archive?

- Support needed?

Moratorium, licenses

After archiving: Conditions, e.g.:

- How long should it be retained?

- Who owns the data?

- Who is responsible for the data?

- Who is responsible for user support? (technical, scientific questions)

- Is deletion of data possible?

By publishing your data with citation information you can significantly increase the impact of your project

Who is your data publisher?

To view the list of KomFor data publishers, follow this link.

Preparations for data to be published?

This is part of the format curation and archive requirements. Ask the publication agent about authorship, quality assurance etc.

How is the data published?

To answer this question, go to Publish Data.

How can it be retrieved and used?

Learn more under FIND & USE DATA or Use Data.

What are the benefits?

Publishing data is a strong incentive for scientists to share their data and has positive effects.

Published data let scientists conveniently discover, find and access scientific data permanently via a persistent identifier.

Data Publication Service extends these services by the following benefits:

- ensuring a high data quality and long-term usability

- ensuring a permanent and persistent access of data and metadata via the persistent identifiers

- enabling easy and clear data citations in scientific publications using the persistent identifiers for the credit of the data creators

- enabling availability and usability of article underlying data with data references

The impact on citation rates can be seen in a study at the Smithsonian Astrophysical Observatory of science articles that provide access to their underlying data.

Articles with data links are cited more than articles without any links to research data.

The analysed articles acquired on average 20% more citations over a period of 10 years.

Henneken, E. A., Accomazzi, A. (2011): Linking to Data - Effect on Citation Rates in Astronomy. http://arxiv.org/abs/1111.3618v1